Hi, I'm Mayank

I specialize in optimizing models and deploying AI-driven solutions for real-world impact.

About

I am a Machine Learning Engineer and Data Scientist. My focus lies in designing scalable machine learning models, automating workflows with MLOps, and optimizing systems for production deployment. Currently, I am leveraging generative AI and LLMs to solve complex challenges while ensuring models are scalable, efficient, and production-ready.

Projects

Check out my work

I've worked on a range of machine learning projects. Here are a few that showcase my expertise and dedication to the field.

On Screen - Real-Time Autonomous Mobile Agent

Built an Android AI agent that can see the phone screen, understand voice commands, and complete multi-step mobile tasks in real time. The cloud branch uses model APIs for fast autonomous screen control, while the on-device branch explores private local inference with speech-to-text, a vision-language model, and text-to-speech running directly on the phone.

Speech-to-Text Transformer from Scratch

Built a complete Speech-to-Text Transformer model from scratch using PyTorch, converting raw audio waveforms into text without pre-trained models. The model implements convolutional downsampling, multi-head self-attention, Residual Vector Quantization (RVQ), and CTC loss for alignment-free training. It was trained on the LJSpeech dataset using an A100 GPU.
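Residual Vector Quantization encodes a vector in stages: each codebook quantizes whatever error the previous stages left behind, so K codebooks of size N can produce up to N^K distinct reconstructions. A minimal plain-Python sketch of the encoding step, assuming small hand-written codebooks; the function name `rvq_encode` is hypothetical, and the project's codebook sizes, dimensions, and training are not described here:

```python
from typing import List, Sequence, Tuple

Vector = List[float]


def squared_distance(a: Sequence[float], b: Sequence[float]) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))


def rvq_encode(x: Sequence[float], codebooks: List[List[Vector]]) -> Tuple[List[int], Vector]:
    """Quantize x stage by stage; each codebook encodes the residual left by earlier stages."""
    residual = list(x)
    reconstruction = [0.0] * len(x)
    indices: List[int] = []
    for codebook in codebooks:
        # pick the code vector closest to the part of x that is still unexplained
        best = min(range(len(codebook)), key=lambda i: squared_distance(residual, codebook[i]))
        indices.append(best)
        code = codebook[best]
        reconstruction = [r + c for r, c in zip(reconstruction, code)]
        residual = [r - c for r, c in zip(residual, code)]
    return indices, reconstruction


# toy example: a coarse codebook followed by a fine one
codebooks = [
    [[0.0, 0.0], [1.0, 1.0]],
    [[0.0, 0.0], [0.25, -0.25]],
]
indices, approx = rvq_encode([1.2, 0.8], codebooks)
```

Here the first stage snaps the input to `[1.0, 1.0]` and the second stage corrects the leftover `[0.2, -0.2]` with `[0.25, -0.25]`, so the model only needs to carry one small index per stage.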

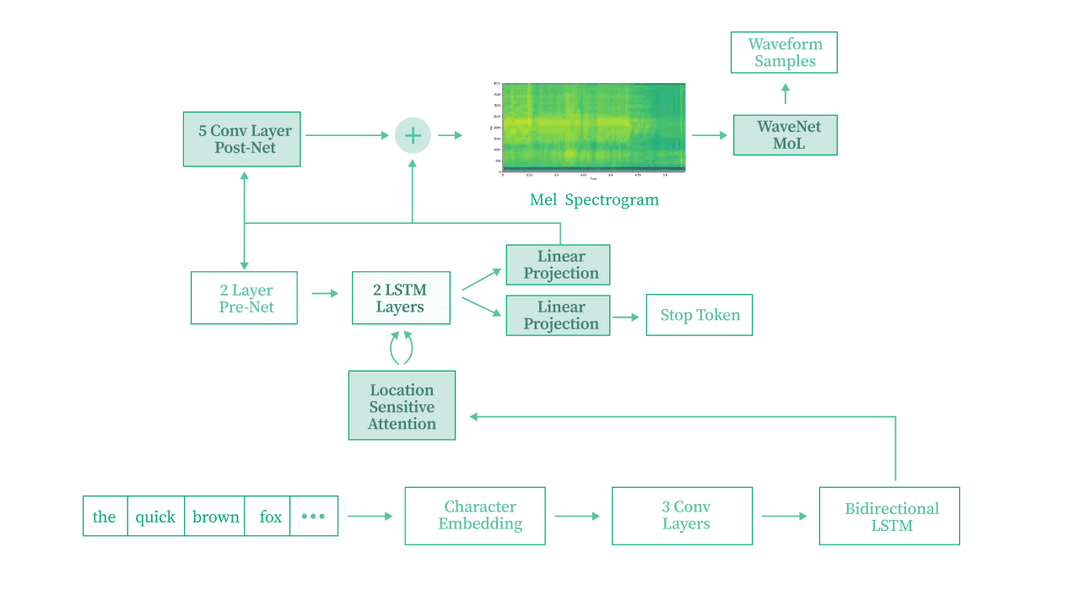

Text-to-Speech (Tacotron 2) from Scratch

Implemented a Tacotron 2 neural text-to-speech model from scratch in PyTorch. The model generates mel-spectrograms from raw text input using an encoder-decoder architecture with attention mechanisms, then converts them to audio waveforms. Trained on the LJSpeech dataset.

SHADE-Gym - 1.5B Sabotage Monitor with GRPO

Built an OpenEnv-native scalable oversight gym for hidden sabotage detection, inspired by SHADE-Arena. A frozen DeepSeek-R1 attacker executes hidden side tasks inside deterministic Python sandboxes, while a Qwen2.5-1.5B LoRA monitor is trained with TRL GRPO and verifiable rewards to flag sabotage from public tool-call traces. The monitor reaches 0.893 AUROC with 0.88 recall and a 0.12 false-positive rate (FPR).
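GRPO drops the learned value function (critic) and instead scores each sampled completion relative to the other completions for the same prompt. A minimal sketch of that group-relative advantage, paired with a hypothetical binary verifiable reward for the monitor (1.0 when its sabotage verdict matches the sandbox's ground truth). The project's actual reward design and TRL's internal implementation may differ, for example in how the standard deviation is computed:

```python
import statistics
from typing import List


def verifiable_reward(flagged: bool, sabotage_present: bool) -> float:
    """Hypothetical reward: 1.0 if the monitor's verdict matches the sandbox ground truth."""
    return 1.0 if flagged == sabotage_present else 0.0


def group_relative_advantages(rewards: List[float], eps: float = 1e-6) -> List[float]:
    """Standardize each reward against the mean and std of its own group of completions."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)  # population std; eps avoids division by zero
    return [(r - mean) / (std + eps) for r in rewards]


# four sampled monitor verdicts for one trace that really does contain sabotage
verdicts = [True, True, False, True]
rewards = [verifiable_reward(v, sabotage_present=True) for v in verdicts]
advantages = group_relative_advantages(rewards)
```

Correct verdicts get a positive advantage and the wrong one a negative advantage. When every completion in a group earns the same reward, all advantages are zero and that group contributes no learning signal.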

API Testing RL Environment for OpenEnv

Built an OpenEnv reinforcement-learning environment where agents test a deliberately buggy REST task-management API. The environment includes 13 planted vulnerabilities mapped to OWASP API Security Top 10, seed-randomized data, deterministic bug detectors, a 5-signal reward function, and automatic OWASP-style bug bounty reports.

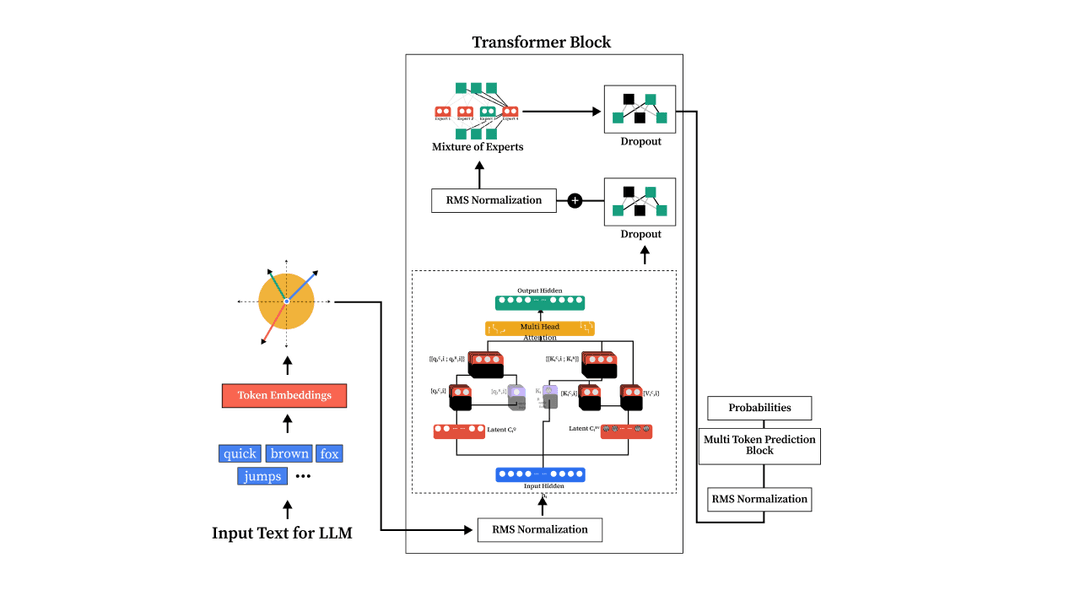

DeepSeek V3 LLM from Scratch in PyTorch

Implemented the complete DeepSeek V3 architecture from scratch as a 100M+ parameter transformer featuring Multi-Head Latent Attention (MLA), Mixture of Experts (MoE), and Multi-Token Prediction (MTP). Trained on ~2.5B tokens from the FineWeb-Edu dataset on an NVIDIA A100 80GB GPU.
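In a Mixture of Experts layer, a router sends each token to only a few experts, so per-token compute stays small while the total parameter count grows. A simplified top-k router in the spirit of DeepSeek-V3's (sigmoid affinity scores, top-k selection, gates renormalized over the selected experts only), written in plain Python. The shared experts, the bias-based load balancing, and the rest of the project's implementation are omitted; the centroid vectors and toy experts are assumptions for illustration:

```python
import math
from typing import Callable, List, Sequence, Tuple

Expert = Callable[[List[float]], List[float]]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def moe_forward(
    x: List[float],
    centroids: Sequence[Sequence[float]],
    experts: Sequence[Expert],
    k: int = 2,
) -> Tuple[List[float], List[int]]:
    """Route token x to its k highest-affinity experts and mix their outputs."""
    # affinity of the token to each expert's centroid
    scores = [sigmoid(sum(a * b for a, b in zip(x, c))) for c in centroids]
    # indices of the k highest-scoring experts, best first
    top = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    total = sum(scores[i] for i in top)
    out = [0.0] * len(x)
    for i in top:
        gate = scores[i] / total  # normalize among the selected experts only
        y = experts[i](x)  # unselected experts are never evaluated
        out = [o + gate * v for o, v in zip(out, y)]
    return out, top


# toy experts that just scale their input
experts = [lambda v, s=s: [s * a for a in v] for s in (1.0, 2.0, 3.0)]
centroids = [[1.0, 0.0], [0.0, 1.0], [-1.0, -1.0]]
output, chosen = moe_forward([2.0, 1.0], centroids, experts, k=2)
```

With `k=2`, the third expert is skipped entirely for this token, which is where the compute savings come from.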

Fine-Tuning Large Language Models (LLMs)

Fine-tuned various open-source LLMs, including LLaMA 2, Mistral, and Qwen, as well as vision-language models, for domain-specific tasks. Leveraged efficient methods such as LoRA, QLoRA, and quantization using Unsloth and Hugging Face.
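LoRA freezes a pretrained weight matrix W and learns a low-rank update, so an adapted linear layer computes h = Wx + (α/r)·BAx, with A of shape r × d_in and B of shape d_out × r. Unsloth and Hugging Face PEFT handle this internally; the plain-Python sketch below only spells out the arithmetic with toy matrices, and the function names are illustrative:

```python
from typing import List

Matrix = List[List[float]]


def matvec(m: Matrix, v: List[float]) -> List[float]:
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def lora_forward(W: Matrix, A: Matrix, B: Matrix, x: List[float], alpha: float, r: int) -> List[float]:
    """h = W x + (alpha / r) * B (A x); W stays frozen, only A and B are trained."""
    base = matvec(W, x)  # frozen pretrained path
    delta = matvec(B, matvec(A, x))  # low-rank path: project down to r dims, then back up
    scale = alpha / r
    return [h + scale * d for h, d in zip(base, delta)]


W = [[1.0, 0.0], [0.0, 1.0]]  # frozen 2x2 weight
A = [[1.0, 1.0]]  # rank r = 1, shape 1 x 2
B = [[0.0], [0.0]]  # shape 2 x 1, zero-initialized
h = lora_forward(W, A, B, [1.0, 2.0], alpha=2.0, r=1)
```

Because B is initialized to zero in the LoRA paper, the adapted layer starts out identical to the frozen one, and only r·(d_in + d_out) parameters are trained instead of d_in·d_out. QLoRA additionally stores W in 4-bit precision, which this sketch does not model.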

Large Language Model (LLM) from Scratch

Implemented a Large Language Model (LLM) from scratch, covering every stage from data preparation and model architecture to pretraining and fine-tuning. This project demystifies transformer-based models through hands-on code and experiments, enabling a deeper understanding of attention mechanisms and token prediction.
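At the heart of a decoder-only LLM is causal self-attention: each position mixes the value vectors of itself and earlier positions, weighted by a softmax over scaled query-key dot products. A single-head plain-Python sketch, not the project's code, assuming Q, K, and V are already-projected lists of vectors:

```python
import math
from typing import List

Matrix = List[List[float]]


def softmax(xs: List[float]) -> List[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    s = sum(exps)
    return [e / s for e in exps]


def causal_attention(Q: Matrix, K: Matrix, V: Matrix) -> Matrix:
    """Scaled dot-product attention where position t only attends to positions <= t."""
    d = len(Q[0])
    out: Matrix = []
    for t, q in enumerate(Q):
        # scores against the current and earlier positions only (the causal mask)
        scores = [sum(a * b for a, b in zip(q, K[j])) / math.sqrt(d) for j in range(t + 1)]
        weights = softmax(scores)
        out.append([sum(w * V[j][c] for j, w in enumerate(weights)) for c in range(len(V[0]))])
    return out
```

Because position t never looks at positions after it, the same forward pass can train next-token prediction at every position of a sequence at once.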

US Visa Approval Prediction using MLOps

Built an end-to-end MLOps pipeline to predict the approval status of US visa applications. Implemented machine learning models, deep learning techniques, and automated deployment pipelines.

Network Security - Malicious URL Detection using MLOps

Developed an end-to-end MLOps project to detect malicious URLs using XGBoost. Integrated robust pipelines for data ingestion, model training, deployment, and monitoring.

Customer Satisfaction Prediction using ZenML

Predicted customer satisfaction scores for future orders using historical e-commerce data from the Brazilian E-Commerce Public Dataset by Olist. This project leverages multiple machine learning models like CatBoost, XGBoost, and LightGBM, built within a ZenML pipeline to create a production-ready solution.

Latest Blogs


Some of My Lectures

A visual guide to Word Embeddings

July 9, 2025

Dive deep into the fascinating world of word embeddings and discover how computers transform text into meaningful numbers!

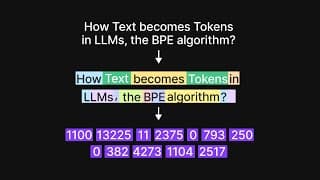

A visual introduction to tokenization in LLMs | Byte Pair Encoding Algorithm

March 13, 2025

In this video, I explain tokenization in Large Language Models (LLMs) visually.
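The core training loop of Byte Pair Encoding, which repeatedly merges the most frequent adjacent pair of symbols into a new symbol, fits in a few lines of plain Python. This is a toy character-level sketch, not the code from the video: it omits end-of-word markers and the byte-level vocabulary used by GPT-style tokenizers, and the alphabetical tie-break is an arbitrary choice made so the result is deterministic:

```python
from collections import Counter
from typing import Dict, List, Tuple

Pair = Tuple[str, str]


def merge_symbols(symbols: Tuple[str, ...], pair: Pair) -> Tuple[str, ...]:
    """Replace every adjacent occurrence of `pair` with the concatenated symbol."""
    out: List[str] = []
    i = 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            out.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            out.append(symbols[i])
            i += 1
    return tuple(out)


def learn_bpe(corpus: List[str], num_merges: int) -> List[Pair]:
    # each distinct word starts as a tuple of characters, weighted by its frequency
    words: Dict[Tuple[str, ...], int] = dict(Counter(tuple(w) for w in corpus))
    merges: List[Pair] = []
    for _ in range(num_merges):
        counts: Counter = Counter()
        for symbols, freq in words.items():
            for pair in zip(symbols, symbols[1:]):
                counts[pair] += freq
        if not counts:
            break  # every word is already a single symbol
        # most frequent pair; ties broken alphabetically
        best = min(counts, key=lambda p: (-counts[p], p))
        merges.append(best)
        new_words: Dict[Tuple[str, ...], int] = {}
        for symbols, freq in words.items():
            merged = merge_symbols(symbols, best)
            new_words[merged] = new_words.get(merged, 0) + freq
        words = new_words
    return merges


def apply_bpe(word: str, merges: List[Pair]) -> List[str]:
    symbols = tuple(word)
    for pair in merges:  # replay merges in the order they were learned
        symbols = merge_symbols(symbols, pair)
    return list(symbols)


corpus = ["low"] * 5 + ["lower"] * 2 + ["newest"] * 6 + ["widest"] * 3
merges = learn_bpe(corpus, num_merges=3)
tokens = apply_bpe("lowest", merges)
```

On this toy corpus the first merges are `('e', 's')`, then `('es', 't')`, then `('l', 'o')`, so the unseen word "lowest" is split into `['lo', 'w', 'est']` using subwords learned from other words.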

Skills

Get in Touch

Want to chat? Just send me a DM with a direct question on Twitter, and I'll respond whenever I can. I will ignore all solicitations.